Data Validation is one of those topics that can immediately raise stress levels. The reports can feel technical, the timelines are tight, and the outcomes aren’t clear. It’s often viewed as a compliance step tied to PEIMS. But in reality, it provides a structured process for reviewing data before accountability calculations take place. In this article, we examine how Data Validation and Accountability work together.

What “Accountability Data Validation” Actually Is

Accountability Data Validation is the process that the Texas Education Agency (TEA) uses to review data before finalizing accountability calculations. It’s not a rating, a consequence, or a judgment about performance. It’s a review of whether the submitted data aligns with the required rules.

Districts receive Data Validation reports that flag potential issues related to assessment participation and leaver records. These reports are designed to surface inconsistencies, missing information, or records that may require local review.

It is important to distinguish what Data Validation is and what it is not.

- Data Validation occurs before accountability calculations are finalized.

- It is not the accountability system itself, and it does not determine ratings.

Data Validation matters because it affects the data that are finalized for accountability calculations.

How Data Validation Connects to Accountability

The connection between Data Validation and accountability isn’t always obvious, but it is important.

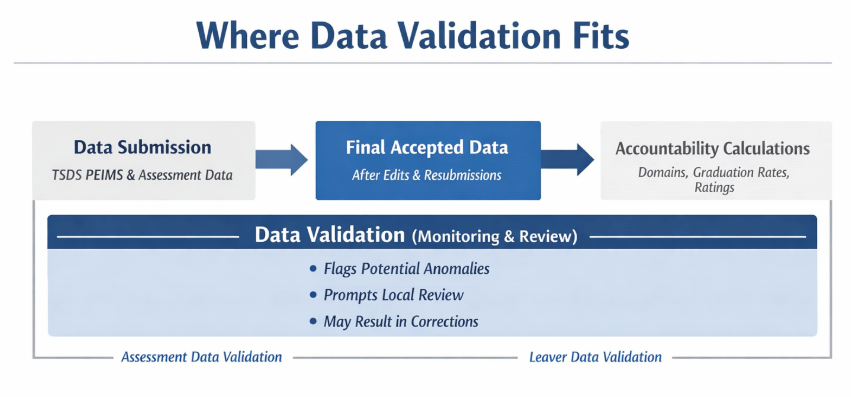

Both processes rely on the same underlying Public Education Information Management System (PEIMS) and assessment data. Data Validation is not a formal step in the accountability calculation process. Instead, it provides a structured way to review the data that will later be used in accountability calculations.

Student data is collected and submitted through the Texas Student Data System (TSDS) PEIMS and assessment systems. Those data points move through standard edits, rules, and timelines. Accountability calculations are later run using the final, accepted data according to the rules in the Accountability Manual.

Data Validation analysis is applied to the same data to flag potential anomalies that require local review. When indicators are triggered, districts review the records and confirm accuracy or submit corrections through standard resubmission processes when allowed. Corrections made during this period can change the data used later in accountability calculations.

Data Validation does not calculate accountability outcomes. It can influence them by shaping which data are finalized for accountability.

Which Data Validations Impact Accountability

Not all Data Validation reports have the same relationship to accountability. Some are informational. Others review records that directly feed accountability measures.

Two Data Validation areas are most closely connected to accountability outcomes:

- Assessment Data Validation

- Leaver Data Validation

Assessment Data Validation

Assessment Data Validation reviews assessment records for patterns that may indicate reporting issues or testing irregularities. These reviews use the same assessment data that appear in accountability calculations.

When indicators are triggered, districts review the underlying records and confirm accuracy or submit corrections through resubmission timelines when allowed. If assessment records are corrected, those changes can affect which students meet accountability subset criteria and which assessment results are included in measures such as Student Achievement, School Progress, and Closing the Gaps.

Assessment Data Validation does not determine accountability results. It can influence the data set used for accountability by identifying records that require review.

Leaver Data Validation

Leaver Data Validation examines PEIMS leaver records related to student exits, including graduates, dropouts, and continuing students. These reviews focus on whether leaver records meet required definitions and documentation standards.

Graduation and dropout rates used in accountability are calculated from the final accepted PEIMS leaver data after all standard submission and correction windows.

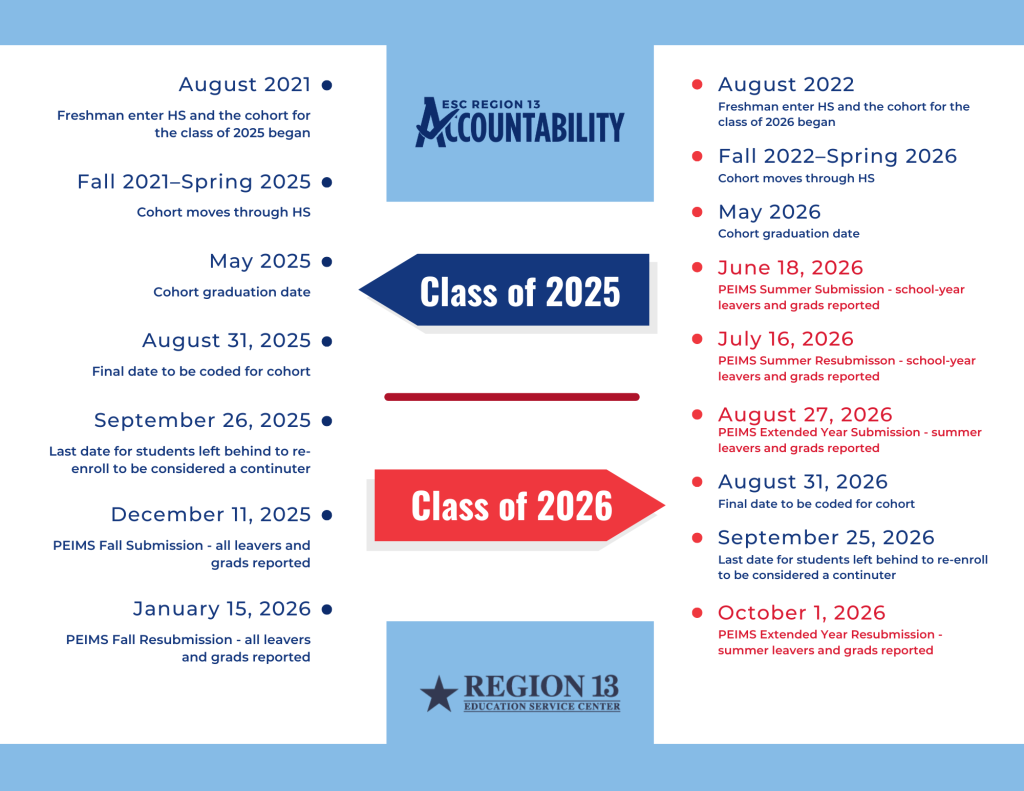

With recent changes to the leaver reporting timeline, Summer and Extended Year PEIMS submissions now serve as the primary source of current-year leaver and graduate data. Cohorts are finalized after the Extended Year submission, rather than at the Fall submission point used in prior years.

The accompanying timeline illustrates the difference between prior and current cohort finalization, showing how Summer and Extended Year PEIMS submissions now determine when cohorts are finalized.

As a result, graduation and dropout rates used in accountability are now based on leaver records finalized through the Summer and Extended Year PEIMS submissions rather than the Fall submission. Because summer and extended-year reporting is ongoing, Data Validation indicators may still appear across an extended window.

Data Validation indicators related to leaver records prompt districts to confirm or correct coding during those windows. There is no separate graduation data validation process beyond this review of leaver records.

Because graduation and dropout rates are used across multiple accountability measures, corrections to leaver data can affect accountability outcomes once final data are used.

Common Misconceptions

“This is just PEIMS.”

Data Validation is often associated with PEIMS because the data reviewed originates there. Its purpose, however, is to support accurate review of submitted data.

Data Validation flags potential anomalies in the same data that later feed accountability calculations. While it does not calculate ratings, it provides a structured process for reviewing and, when appropriate, correcting data that affect accountability measures.

Data Validation sits between data submission and accountability, even though it is not a formal step in the accountability calculation process.

Data Validation is a monitoring process; accountability inclusion rules (e.g., accountability subset) are defined in the Accountability Manual and are separate from Data Validation indicators, as shown in the graphic below.

“If we’re flagged, accountability is already affected.”

A Data Validation indicator does not imply that accountability outcomes have already changed, nor does it automatically imply that the data are incorrect.

Indicators signal the need for local review. In many cases, that review confirms the data are accurate. In other cases, corrections made through standard resubmission windows may change the final data used in accountability calculations.

Data Validation should be viewed as an opportunity to confirm data accuracy before accountability calculations are finalized, not as a determination of accountability results.

What Districts Should Be Doing Right Now

As Data Validation reports are released, districts should review the information provided and understand how it relates to data used for accountability.

This includes examining flagged records, identifying which validation area they fall under, and confirming whether the underlying data accurately reflect student participation, exits, or outcomes. When indicators are triggered, they may point to individual records that require review. They can also signal the need to look more closely at the systems and processes that produced the data.

Looking across flagged records can help determine whether an issue is isolated or reflects a broader data quality issue. In some cases, the review will confirm that no changes are needed. In other cases, corrections made through standard resubmission timelines may change the final data used in accountability calculations.
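For districts whose data teams work with exported lists of flagged records, the idea of looking across records can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the field names (`student_id`, `campus`, `area`), the sample records, and the `cluster_threshold` cutoff are all hypothetical and do not reflect any TEA report layout or rule.

```python
from collections import Counter

# Hypothetical export of flagged records. Field names and values are
# illustrative only; they are not an actual TEA or TSDS report layout.
flagged_records = [
    {"student_id": "A001", "campus": "Campus 1", "area": "leaver"},
    {"student_id": "A002", "campus": "Campus 1", "area": "leaver"},
    {"student_id": "A003", "campus": "Campus 1", "area": "leaver"},
    {"student_id": "A004", "campus": "Campus 2", "area": "assessment"},
]


def summarize_flags(records, cluster_threshold=3):
    """Count flagged records per (campus, area) and label each group.

    cluster_threshold is an arbitrary local cutoff chosen for this sketch,
    not a TEA rule. Groups at or above it are marked for a closer look.
    """
    counts = Counter((r["campus"], r["area"]) for r in records)
    return {
        group: ("possible pattern" if n >= cluster_threshold else "isolated")
        for group, n in counts.items()
    }


summary = summarize_flags(flagged_records)
for (campus, area), label in sorted(summary.items()):
    print(f"{campus} / {area}: {label}")
```

A group labeled "possible pattern" is only a prompt to look at the process that produced those records, such as how a campus codes student exits. It does not mean the records are wrong.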

Questions that come up during this process are expected. Understanding why an indicator was triggered, whether it affects accountability data, and what review or correction options are available helps districts stay oriented as accountability calculations move forward.

Conclusion

Accountability Data Validation is best understood as a checkpoint in the larger accountability process. It is not a judgment about performance, but a structured opportunity to review the accuracy of submitted data before accountability calculations are finalized.

The accountability specialists at ESC Region 13 are always available to answer questions and help you through the process. Subscribe to our newsletter for updates, news, and the most current information on the A–F Accountability system. Visit our webpage for the latest professional development opportunities and downloadable resources or to connect with us.

Melinda Marquez is the Director of Accountability, Assessment, and Leadership Systems here at ESC Region 13.
